Using math to battle hearing loss

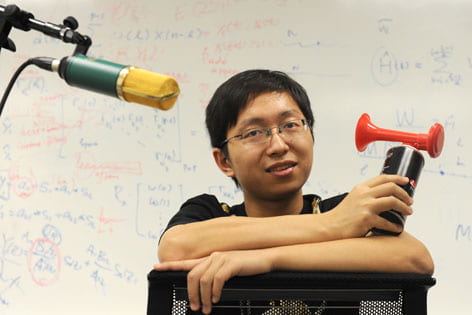


As a child, Meng Yu struggled to communicate with his grandfather, who had hearing loss.

“He would talk with me about Chinese history and literature, but responding to him was tough because I had to speak loudly. He didn’t wear a hearing aid,” says Yu, a mathematics doctoral candidate at UC Irvine.

Today Yu is using his academic skills to help the hearing-impaired. He is fine-tuning a set of mathematical computer instructions that pulls apart overlapping voices so a listener can hear each of them distinctly.

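He hopes eventually to apply this blind speech separation algorithm to hearing aids. (“Blind” means the algorithm untangles the voices without knowing in advance what each speaker sounds like or how the voices were mixed.) Today’s hearing aids amplify sound but don’t let the user pick out one voice and hear it clearly in a noisy setting.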

“I don’t think amplification is enough,” Yu says. “They should have the ability to distinguish speech, like our ears and brain can do.”

More than 31.5 million people describe themselves as having difficulty hearing, according to the U.S. Equal Employment Opportunity Commission. A recent study found that combat troops often suffer hearing loss, and the Department of Veterans Affairs lists it as a common disability among veterans.

Research into blind speech separation began about 20 years ago. Early algorithms were designed for idealized rooms with no furniture (which deflects sound) and no background noise (such as noise from an air conditioner). They were also slow, taking seconds rather than milliseconds to separate voices.
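To make the idealized setting concrete, here is a minimal sketch of it: each microphone records a plain weighted sum of the voices, with no echoes and no background noise. It uses FastICA (independent component analysis), a standard textbook technique for blind source separation. It is not Yu’s algorithm, and the function names and test signals (a sine wave and a square wave standing in for two voices) are hypothetical.

```python
import numpy as np


def mix_sources(sources, mixing):
    """Idealized 'instantaneous' mix: each microphone records a weighted sum
    of the voices, with no echoes, delays or background noise."""
    return mixing @ sources


def whiten(x):
    """Center each signal and decorrelate them so they have unit variance."""
    x = x - x.mean(axis=1, keepdims=True)
    eigvals, eigvecs = np.linalg.eigh(np.cov(x))
    return eigvecs @ np.diag(1.0 / np.sqrt(eigvals)) @ eigvecs.T @ x


def sym_decorrelate(w):
    """Return (W W^T)^(-1/2) W so the unmixing rows stay orthonormal."""
    s, u = np.linalg.eigh(w @ w.T)
    return u @ np.diag(1.0 / np.sqrt(s)) @ u.T @ w


def fastica(x, n_iter=200, tol=1e-6, seed=0):
    """Symmetric FastICA with a tanh nonlinearity.

    Returns one estimated source per row. As in any blind separation, the
    order, sign and volume of the recovered signals are arbitrary.
    """
    rng = np.random.default_rng(seed)
    z = whiten(x)
    n, m = z.shape
    w = sym_decorrelate(rng.standard_normal((n, n)))
    for _ in range(n_iter):
        g = np.tanh(w @ z)
        # Fixed-point update E[z g(w^T z)] - E[g'(w^T z)] w, for every row at once
        w_new = sym_decorrelate((g @ z.T) / m - np.diag((1.0 - g**2).mean(axis=1)) @ w)
        # Converged once each new row points the same way (up to sign) as the old one
        converged = np.max(np.abs(np.abs(np.diag(w_new @ w.T)) - 1.0)) < tol
        w = w_new
        if converged:
            break
    return w @ z


# Demo: a sine wave and a square wave stand in for two voices.
t = np.linspace(0.0, 1.0, 4000)
sources = np.vstack([np.sin(2 * np.pi * 5 * t), np.sign(np.sin(2 * np.pi * 3 * t))])
mixed = mix_sources(sources, np.array([[1.0, 0.6], [0.4, 1.0]]))
estimates = fastica(mixed)

# Correlation between each true source (rows) and each estimate (columns)
match = np.abs(np.corrcoef(np.vstack([sources, estimates]))[:2, 2:])
print(np.round(match, 3))
```

Each row of `match` should have one entry close to 1, showing that every original signal was recovered from the mixture. Real rooms add echoes, delays and noise that this simple mixing model leaves out, which is the harder problem newer algorithms must handle.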

“If it takes a long time to compute, there may be a large delay for the listener to hear the sound. For hearing aids, the separation must occur in real time,” says Yu, who is trying to make his algorithm simple and quick enough for the devices.
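Yu’s point about delay can be put in rough numbers. Digital audio is usually processed in short blocks of samples, so a device must wait for a block to fill before working on it, and it keeps up only if each block is processed before the next one arrives. The sketch below is a simplified model with hypothetical function names and illustrative values (16 kHz audio, 128-sample blocks) that are not taken from Yu’s work. It ignores overlapping blocks and hardware delays.

```python
def buffering_delay_ms(block_size, sample_rate_hz):
    """Time spent waiting for one full block of samples to arrive."""
    return 1000.0 * block_size / sample_rate_hz


def keeps_up(processing_ms_per_block, block_size, sample_rate_hz):
    """Block-by-block processing runs in real time only if each block is
    finished before the next one has finished arriving."""
    return processing_ms_per_block <= buffering_delay_ms(block_size, sample_rate_hz)


def rough_delay_ms(processing_ms_per_block, block_size, sample_rate_hz):
    """Rough lower bound on the delay the listener hears: buffering plus
    computation (ignores converters, filters and other hardware delays)."""
    return buffering_delay_ms(block_size, sample_rate_hz) + processing_ms_per_block


# Illustrative numbers only: 16 kHz audio in 128-sample blocks.
print(buffering_delay_ms(128, 16000))  # → 8.0
print(keeps_up(3.0, 128, 16000))       # → True
print(keeps_up(2000.0, 128, 16000))    # → False
print(rough_delay_ms(3.0, 128, 16000))  # → 11.0
```

Under these assumptions a separation step that takes a few milliseconds adds only a barely noticeable delay, while one that takes seconds per block can never catch up with live speech.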

The technology might also be applied to electronic communications, such as teleconferencing, to help people without impairment better hear a specific voice.

“Meng’s work holds great promise and could impact many lives – from baby boomers to war veterans,” says mathematics professor Jack Xin. “He is a creative, imaginative and productive researcher.”